How does human cooperation emerge from evolution and learning?

Human cooperative behavior is one of the central puzzles in biology and the social sciences. This page treats cooperation as a two-timescale problem: some cooperative tendencies are shaped across generations by natural selection, and some are acquired within a lifetime through learning.

- Nature → Evolving cooperation over generations by natural selection
- Nurture → Learning to cooperate within a lifetime

What cooperation means here

In this project, cooperation means behavior that aligns with other actors through accommodation, support, or shared coordination rather than obstruction or opposition. For the fuller definition and the boundary with adversarial behavior, see the pages "What is Cooperation?" and "What is Adversarial Behavior?".

Why cooperation is a puzzle

Cooperation is easy to observe, but hard to explain. In many environments, individual incentives and collective outcomes pull in different directions. The same behavior may look cooperative at one timescale and exploitative at another.

- Individual and collective interests often diverge in the short run.
- Repeated interaction, memory, and expectation matter, so behavior depends on history rather than only on the present moment.
- Ecological structure changes what cooperation costs, what it returns, and who benefits from it.
- Some behavioral capacities are inherited, while specific strategies are still learned during life.

Any serious explanation of cooperation therefore has to account for both fast and slow adaptation: how agents change within a lifetime, and how populations change across generations.
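The gap between individual and collective interest can be made concrete with a one-shot prisoner's dilemma. The sketch below is illustrative only: the payoff numbers and the names `PAYOFF`, `best_response`, and `joint_payoff` are assumptions chosen for the example, not values or code from this project.

```python
# Hypothetical payoffs for a one-shot prisoner's dilemma.
# Each entry maps (my_move, other_move) to (my_payoff, other_payoff).
# The numbers are illustrative assumptions, not project parameters.
PAYOFF = {
    ("C", "C"): (3, 3),  # mutual cooperation
    ("C", "D"): (0, 5),  # I cooperate, the other defects
    ("D", "C"): (5, 0),  # I defect, the other cooperates
    ("D", "D"): (1, 1),  # mutual defection
}


def best_response(other_move: str) -> str:
    """Return the move that maximizes my own payoff against other_move."""
    return max("CD", key=lambda my_move: PAYOFF[(my_move, other_move)][0])


def joint_payoff(a: str, b: str) -> int:
    """Return the combined payoff of both players."""
    return sum(PAYOFF[(a, b)])


# Individually, defection is the best reply whatever the partner does.
individual_choice = {other: best_response(other) for other in "CD"}

# Collectively, mutual cooperation yields the highest combined payoff.
collective_best = max(PAYOFF, key=lambda pair: joint_payoff(*pair))

print(individual_choice)  # {'C': 'D', 'D': 'D'}
print(collective_best)    # ('C', 'C')
```

With these assumed payoffs, each player does better by defecting in a single encounter, while the pair as a whole does best under mutual cooperation. Repetition, memory, and ecological structure are the factors that can change this calculation.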

Nature and nurture as intertwined sources of cooperation

Most research has examined either evolutionary explanations or learning-based explanations for the emergence of cooperation, each in isolation. Yet in natural systems, cooperation emerges from the interaction of evolution and learning across two timescales.

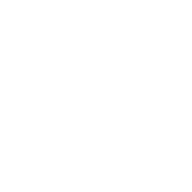

Human cooperative behavior can be understood as present-day action running on ancestral hardware. Its origins span multiple timescales, from evolutionary changes millions of years ago to learning processes unfolding fractions of a second ago.

Display 1 frames the central problem of the site: cooperation is shaped by what evolution builds into agents and by what those agents later learn from local interaction. If either side is removed, the explanation becomes incomplete.

Rather than prescribing cooperative behavior through direct engineering, this project asks under which minimal constraints cooperative behavior emerges and persists in a multi-agent ecosystem. Nature and nurture are treated here as dynamically coupled processes rather than separate explanatory boxes.

| Dimension | Nature | Nurture |
|---|---|---|
| Timescale | Generations | Lifetime |
| Adaptive process | Selection | Learning |
| What changes | Inherited tendencies | Policy and behavior |
| Main signal | Fitness | Reward and experience |
| Core question | Which traits spread? | What does an agent learn to do? |

Display 2 gives the broad nature-versus-nurture split. The next section shows how plasticity connects the two sides of that split.

Plasticity: the missing link between evolved and learned cooperation

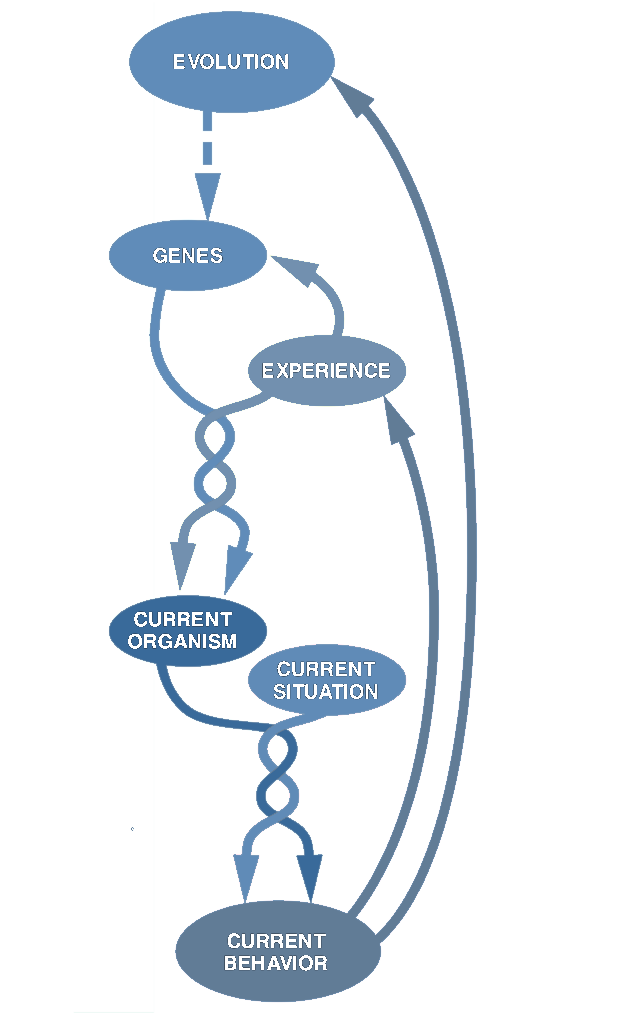

Plasticity is the bridge between nature and nurture: evolution shapes the capacity to adapt, and learning uses that capacity to produce behavior. Here, it means an inherited capacity to adjust behavior in response to local conditions, social feedback, and accumulated experience.

Plasticity is neither learning itself nor a fixed instinct. It is the machinery that makes learning possible: how quickly agents update from experience, how much they remember, how strongly they react to reward or punishment, and how readily they revise trust after cooperation or betrayal. Evolution therefore does not need to hard-code a single cooperative rule such as "always help" or "never trust strangers." Instead, it can shape the capacities that make different responses easier, harder, faster, or slower to learn.

Consider a person entering a new workplace team. They do not arrive with a fully fixed cooperative script. They bring mechanisms for attending to reputation, remembering earlier exchanges, and updating expectations about others. If teammates share work fairly and return favors, that person is likely to become more open and cooperative over time. If teammates free-ride or exploit helpful behavior, the same person may become more cautious. What is inherited is not the exact final strategy, but the capacity to adjust strategy from experience.

A familiar analogy comes from language acquisition. Chomsky argued, first against behaviorist accounts in 1959 and then more systematically in 1965, that children are not born already speaking a particular language, but with an innate capacity for language that develops through environmental input. The same logic applies here: humans may not be born with one fixed cooperative strategy, but with capacities that allow cooperation to be shaped by experience.

Display 3 is the conceptual hinge of the page: evolution shapes the architecture through which cooperation is learned, rather than encoding a fixed cooperative act directly.

The next display zooms in on plasticity itself: the specific learning machinery that evolution can tune and learning can use.

| Plasticity parameter | What evolution tunes | What learning uses it for |
|---|---|---|
| Learning rate | How quickly a policy can update from experience. | How quickly behavior shifts when cooperation starts to pay off. |
| Memory capacity | How much past interaction can be retained in the learning system. | How much earlier cooperation, defection, or reward history can still influence current action. |
| Exploratory bias | How much variation is available for trying new behavior. | How readily an agent tests new cooperative strategies or role patterns. |
| Social-feedback sensitivity | How strongly selection can favor responsiveness to cues from others. | How strongly praise, punishment, reputation, or reward alter current behavior. |
| Robustness under changing environments | How well plasticity can remain useful when ecological conditions shift. | How well cooperation can persist when partners, costs, or opportunities change. |
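One way to read the table is as a split between inherited parameters and the learner that uses them. The following is a minimal sketch under that reading. The class names `Plasticity` and `Learner`, the field names, and the default values are hypothetical, and the simple value-update rule is an assumption rather than this project's learning algorithm. Robustness is left out because it is better seen as a property of outcomes than as a single parameter.

```python
import random
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Plasticity:
    """Inherited learning parameters (hypothetical names and defaults)."""
    learning_rate: float = 0.2         # how fast value estimates update
    memory_capacity: int = 5           # how many past outcomes are retained
    exploration: float = 0.1           # probability of trying a random action
    feedback_sensitivity: float = 1.0  # scaling applied to social reward


class Learner:
    """Lifetime learning that uses, but never changes, its Plasticity."""

    def __init__(self, plasticity: Plasticity, seed: int = 0):
        self.p = plasticity
        self.values = {"C": 0.0, "D": 0.0}  # estimated value of each action
        # Bounded memory; stored here but not used by the update rule below.
        self.history = deque(maxlen=plasticity.memory_capacity)
        self.rng = random.Random(seed)

    def act(self) -> str:
        """Explore with a fixed probability, otherwise pick the best-valued action."""
        if self.rng.random() < self.p.exploration:
            return self.rng.choice("CD")
        return max(self.values, key=self.values.get)

    def learn(self, action: str, reward: float) -> None:
        """Move the value of the chosen action toward the scaled reward."""
        self.history.append((action, reward))
        target = self.p.feedback_sensitivity * reward
        self.values[action] += self.p.learning_rate * (target - self.values[action])


# Usage: four rewarded cooperative acts with a fast learner and short memory.
agent = Learner(Plasticity(learning_rate=0.5, memory_capacity=3))
for _ in range(4):
    agent.learn("C", reward=1.0)
```

Freezing the `Plasticity` dataclass mirrors the idea that these parameters are fixed within a lifetime and can only change between generations.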

Why the feedback loop matters

The relationship does not stop there. Learning changes ecological structure, ecological structure changes selection pressures, and selection changes which forms of plasticity persist.

- Evolution shapes learning capacities.
- Learning reshapes ecological structure.
- Ecological structure changes which traits selection favors.

Plasticity closes that loop. In unstable environments, high plasticity may be favored because it supports rapid adjustment. In stable environments, lower plasticity may be favored because it reduces cost and preserves reliable behavior.
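The claim about stable and unstable environments can be explored with a toy two-timescale loop. The sketch below is an illustration under strong assumptions, not the project's model: plasticity is reduced to a single inherited learning rate, a lifetime consists of tracking a noisy payoff, and "reliability" is modeled as not chasing noise. The function names `lifetime_error` and `evolve` and all parameter values are hypothetical. The sketch covers only two links of the loop (selection shaping learning capacity, environment shaping selection) and omits learning reshaping the ecology.

```python
import random
from statistics import mean


def lifetime_error(learning_rate, flip_every, rng, steps=500, noise_sd=1.0):
    """Within-lifetime learning: track a noisy payoff, return mean squared error."""
    true_payoff, estimate, total = 1.0, 0.0, 0.0
    for t in range(1, steps + 1):
        if flip_every and t % flip_every == 0:
            true_payoff = -true_payoff  # the environment changes
        observed = true_payoff + rng.gauss(0.0, noise_sd)
        estimate += learning_rate * (observed - estimate)
        total += (estimate - true_payoff) ** 2
    return total / steps


def evolve(flip_every, generations=30, pop_size=20, seed=0):
    """Across-generation selection on the inherited learning rate."""
    rng = random.Random(seed)
    population = [0.3] * pop_size  # everyone starts with the same plasticity
    for generation in range(generations):
        # Every individual in a generation faces the same environment sequence,
        # so differences in error reflect differences in learning rate.
        scored = sorted(
            population,
            key=lambda lr: lifetime_error(lr, flip_every, random.Random(generation)),
        )
        parents = scored[: pop_size // 2]  # lower error means higher fitness
        # Offspring inherit a parent's learning rate with a small mutation,
        # clipped to the range [0.01, 1.0].
        population = [
            min(1.0, max(0.01, rng.choice(parents) + rng.gauss(0.0, 0.05)))
            for _ in range(pop_size)
        ]
    return mean(population)


stable = evolve(flip_every=0)     # payoff never changes
unstable = evolve(flip_every=10)  # payoff reverses every 10 steps
print(f"mean learning rate, stable: {stable:.2f}, unstable: {unstable:.2f}")
```

Under these assumptions, selection should settle on a higher learning rate when the payoff keeps reversing than when it stays fixed, because fast updating tracks change but also amplifies noise.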

Research tools and questions

Tools

This project studies both evolved and learned cooperation using Artificial Intelligence (AI) and Agent-Based Modeling (ABM).

Questions

- Under which ecological conditions does human cooperation emerge at all?
- When should selection favor fixed cooperative tendencies, and when should it favor plasticity?
- When does learning stabilize cooperation, and when does it undermine it?
- How do repeated interaction, population structure, and resource dynamics change the answer?
- Can cooperative behavior emerge from minimal rules without being explicitly engineered?

Methodology

The limits of verification in evolutionary research

A fundamental constraint runs through all research on the evolution of cooperation: it is not possible to prove that any particular mechanism was causally responsible for the emergence of cooperative behavior in human evolutionary history. Evolutionary history is unrepeatable. The selection pressures, population structures, and ecological conditions that shaped human behavior over millions of years cannot be reconstructed with sufficient precision to support the kind of controlled, statistical verification that would settle causal claims. This is sometimes called the historical sciences problem — the evidential record is always incomplete, events branch contingently, and retrospective inference is underdetermined no matter how rich the comparative or archaeological data. Kinship, reciprocity, reputation, punishment, and group selection are all plausible candidates, but demonstrating that any one of them was necessary for human cooperation to emerge, rather than merely consistent with it, lies beyond what current evidence can deliver.

From historical proof to predictive modeling

Given this constraint, the most defensible scientific strategy shifts from verification of past causes to prediction of present behavior. A model earns credibility not by reconstructing what happened but by generating specific, falsifiable predictions about what should be observable in human cooperative behavior today — and under which conditions those patterns should change. This is the standard this project holds itself to: models should make ex ante predictions that can be tested against controlled laboratory experiments, field data, or cross-cultural comparisons of cooperative behavior. The verification happens in the present, even if the mechanisms being modeled operate across evolutionary time.

The tension between abstraction and behavioral fit

This creates a genuine methodological tension. On one side, abstract models with few prior assumptions have the greatest inferential discipline. When cooperation emerges from a model that assumes very little about human nature, the result is informative precisely because little was smuggled in. Axelrod's tournament models and Nowak's five mechanisms work this way: their value comes from parsimony. On the other side, a model that closely mirrors known features of human behavior — social preferences, reputation, language, institutions, cultural norms, and group structure — may fit observed patterns well but explain less, because part of the explanation has already been built into the assumptions. This is the overfitting problem in a theoretical register: a model calibrated to reproduce what we already know about human cooperation has fewer degrees of freedom left to surprise us. It becomes descriptively rich but explanatorily constrained.

A dual modeling strategy

To navigate this tension, this project pursues two modeling approaches in parallel rather than choosing between them.

The first approach is a minimal generative model. It starts with agents that have no pre-specified social preferences, interact under simple ecological rules, and possess only the capacity to learn from local experience. From this sparse starting point, the goal is to build upward: add structure only when its absence prevents the emergence of cooperation or produces behavior that is clearly unlike the target phenomenon. This approach is theoretically disciplined because every added assumption has to earn its place. Its weakness is practical: a model can be elegant and still fail to generate the kinds of cooperative and competitive behavior that human societies actually display.

The second approach is a behaviorally anchored model. It starts from a richer description of human cooperation: agents endowed with cognitive, social, and ecological capacities that are strongly associated with human social life, especially in small-scale foraging societies where human cooperation operated for most of our species' history. These include repeated interaction, reputation sensitivity, norm enforcement, kinship or coalition structure, role differentiation, social learning, and shared access to resources. From this detailed starting point, the goal is to simplify downward: identify which assumptions can be removed without destroying recognizably human cooperative outcomes. Its strength is practical behavioral fit. Its weakness is theoretical risk: if too much human behavior is assumed in advance, the model may reproduce cooperation without explaining its emergence.

The logic of running both approaches is that convergence is more trustworthy than single-model conclusions. If the minimal generative model and the behaviorally anchored model produce overlapping predictions about when cooperation stabilizes and when it breaks down, those predictions do not depend on one particular set of assumptions. Where the two approaches diverge, the divergence itself is informative: it identifies which assumptions are doing the explanatory work. The richer model can also guide the sparse model by showing which missing capacities may be necessary, while the sparse model disciplines the richer one by testing whether those capacities are truly needed.

Computational experiments as a substitute for natural experiments

Because evolutionary history cannot be replayed and the conditions that shaped human cooperation cannot be held constant in the field, this project uses computational experiments to substitute for natural ones. Agent-based simulations and reinforcement learning environments allow conditions to be set precisely, varied one parameter at a time, and replicated across thousands of runs. This makes it possible to ask causal questions — does cooperation increase when relatedness increases, or when memory extends, or when group size shrinks — under controlled conditions that field observation cannot provide. The predictions those experiments generate are then the empirical targets: patterns that should appear in human cooperative behavior if the model captures something real, and whose absence would count against it.
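The experimental logic described above (set conditions precisely, vary one parameter, replicate across many runs) can be sketched as a small sweep harness. The example is a toy, not one of this project's experiments. It varies a single hypothetical parameter, the probability that an interaction continues for another round, and asks whether a reciprocator or a defector earns more when paired with a reciprocator. The payoff values and the names `match_length`, `run_once`, and `sweep` are assumptions made for illustration.

```python
import random
from statistics import mean

# Illustrative prisoner's dilemma payoffs (assumed, not project values):
# temptation, reward, punishment, sucker.
T, R, P, S = 5, 3, 1, 0


def match_length(continue_prob: float, rng: random.Random) -> int:
    """Number of rounds when each round is followed by another with continue_prob."""
    rounds = 1
    while rng.random() < continue_prob:
        rounds += 1
    return rounds


def run_once(continue_prob: float, seed: int) -> float:
    """Payoff advantage of a reciprocator over a defector, each facing a reciprocator."""
    rounds = match_length(continue_prob, random.Random(seed))
    reciprocator = R * rounds        # mutual cooperation in every round
    defector = T + P * (rounds - 1)  # exploits once, then mutual defection
    return reciprocator - defector


def sweep(values, replicates: int = 2000) -> dict:
    """Vary one parameter, hold the rest fixed, and average over seeded replicates."""
    return {v: mean(run_once(v, seed) for seed in range(replicates)) for v in values}


results = sweep([0.2, 0.5, 0.9])
for continue_prob, advantage in results.items():
    print(f"continue_prob={continue_prob}: reciprocator advantage {advantage:+.2f}")
```

Reusing the same seeds for every parameter value keeps the comparison controlled: differences between conditions come from the parameter rather than from different random draws. With these assumed payoffs the expected advantage is 2 / (1 - w) - 4 for continuation probability w, so the sign should flip around w = 0.5. A flip of this kind is what a testable prediction from such an experiment looks like.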

Why AI and agent-based models?

If cooperation depends on both lifetime learning and longer-run selection, then the research tools need to represent both timescales at once. That is why AI and agent-based modeling enter the picture here. They do not appear as add-ons to the argument; they follow from the structure of the problem itself.

- Reinforcement learning provides a concrete model of plasticity within lifetimes: how agents update behavior from reward, punishment, memory, and repeated interaction.
- Agent-based modeling provides a concrete model of ecology: who interacts with whom, how often they meet again, how resources flow, and how population structure shapes incentives.
- Together they make it possible to study how individual adaptation scales up into collective patterns such as trust, reciprocity, free-riding, or stable cooperation.
- They also allow systematic comparison across conditions, helping us ask when cooperation is fragile, when it stabilizes, and when selection should favor more or less plasticity.
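How the two tools combine can be shown in a few lines: an agent-based layer decides who meets whom, and a reinforcement-learning layer decides how each agent updates. The sketch below is a deliberately minimal illustration. The ring layout, the payoff table, the stateless value-learning rule, and the names `Agent` and `simulate` are all assumptions made for the example, not a description of this project's environments.

```python
import random

# Payoff to the first player for (my_move, other_move); illustrative values.
PAYOFF = {("C", "C"): 3, ("C", "D"): 0, ("D", "C"): 5, ("D", "D"): 1}


class Agent:
    """Reinforcement-learning layer: a stateless learner over two actions."""

    def __init__(self, rng, learning_rate=0.1, exploration=0.1):
        self.rng = rng
        self.learning_rate = learning_rate
        self.exploration = exploration
        self.values = {"C": 0.0, "D": 0.0}

    def act(self):
        if self.rng.random() < self.exploration:
            return self.rng.choice("CD")
        return max(self.values, key=self.values.get)

    def learn(self, action, reward):
        self.values[action] += self.learning_rate * (reward - self.values[action])


def simulate(n_agents=20, rounds=200, seed=0):
    """Agent-based layer: agents on a ring play both neighbours every round."""
    rng = random.Random(seed)
    agents = [Agent(rng) for _ in range(n_agents)]
    cooperation_rate = []
    for _ in range(rounds):
        moves = [agent.act() for agent in agents]
        for i, agent in enumerate(agents):
            left, right = moves[i - 1], moves[(i + 1) % n_agents]
            # Reward is the average payoff against the two ring neighbours.
            reward = (PAYOFF[(moves[i], left)] + PAYOFF[(moves[i], right)]) / 2
            agent.learn(moves[i], reward)
        cooperation_rate.append(moves.count("C") / n_agents)
    return cooperation_rate


history = simulate()
print(f"cooperation rate in first round: {history[0]:.2f}, last round: {history[-1]:.2f}")
```

The sketch makes no claim about the cooperation level that results. Its purpose is to show where each ingredient lives: changing the neighbour rule alters the ecology, while changing `learning_rate` or `exploration` alters plasticity, and the two can be varied independently.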

Where to go next

- What is Cooperation? gives the broad definition used throughout the site.
- What is Adversarial Behavior? explains the main opposing category.
- Cooperation in Perspective places cooperation within the broader landscape of human behavior and clarifies what makes it a distinct behavioral pattern.
- Evolved Cooperation asks how cooperative tendencies can spread across generations through evolution.
- Learned Cooperation asks how cooperative behavior can be acquired within a lifetime through adaptation and experience.
- Interaction Evolved-Learned Cooperation connects those two timescales and explores how evolution and learning interact.

References

- Chomsky, N. (1959). Review of B. F. Skinner's Verbal Behavior. Language, 35(1), 26-58. https://doi.org/10.2307/411334

- Chomsky, N. (1965). Aspects of the Theory of Syntax. Cambridge, MA: MIT Press. https://mitpress.mit.edu/9780262527408/aspects-of-the-theory-of-syntax/